Crisis resolution and home treatment (CRHT) teams were introduced in England from 2000/01 with a view to providing intensive home-based care for individuals in crisis as an alternative to hospital treatment, acting as gatekeepers within the mental healthcare pathway, and allowing for a reduction in bed use and in-patient admissions. They are also supposed to reduce out-of-area treatments and support earlier discharge. 1,2 The Department of Health set targets for mental health providers in terms of the number of CRHT teams to put in place and their activity rates, and the achievement of these targets has been strongly incentivised through the Care Quality Commission's performance management regulatory regime. 3 The performance target report in 2007 showed the creation of 343 standard CRHT teams, but attainment of only 95% of the national target number of home treatment episodes. 4 The drive to introduce CRHT teams, and potentially to take resources away from existing community mental health teams, has in part been motivated by evidence on the effectiveness of CRHT teams in reducing admissions. Reference Johnson, Needle, Bindman and Thornicroft5

Johnson and colleagues reported results of a before and after study and a randomised controlled trial of a CRHT team based in an inner-London setting. These studies found a lower probability of being admitted to hospital within 8 weeks after the crisis and reductions in admission rates from 71 to 49% in the 6 weeks after the crisis. Reference Johnson, Nolan, Hoult, White, Bebbington and Sandor6,Reference Johnson, Nolan, Pilling, Sandor, Hoult and McKenzie7 However, these studies may suffer from a lack of generalisability to the rest of the country.

One national research paper explores the reduction in hospital admissions associated with CRHT services, Reference Glover, Arts and Babu8 using an uncontrolled observational study of trends in hospital admissions at primary care trust (PCT) level across England. The authors used analysis of variance to test the difference in mean admission values for the change from the first to the last 2-year period between 1998/99 and 2003/04. They found an association between CRHT availability and declining trends in psychiatric hospital admissions in England over the period of analysis. They also found that the difference in the mean fall in hospital admissions between PCTs with CRHT teams introduced by 2001 and those with no teams by 2003 varied substantially by age/gender population group, by the length of time the team had been available and according to whether broad or narrow team definitions were used. Reference Glover, Arts and Babu8,Reference Glover, Arts and Babu9

There are a number of potential limitations to this methodology. First, there may be long-standing differences in the admissions between PCTs. The difference in the mean fall in admissions between PCTs with CRHT teams introduced by 2001 and those with no teams by 2003 cannot capture the real impact of the implementation of CRHT teams on admissions, since these differences might exist before the introduction of CRHT. Also, some unobservable characteristics might encourage more ‘enthusiastic’ or ‘entrepreneurial’ providers to take up CRHT. This effect might be even more important when considering the timing of providers implementing teams. Thus a control group is needed to evaluate the introduction of this policy and the method needs to consider the potential for self-selection bias and strategic behaviour of providers taking up CRHT. Ideally, we also need to simultaneously control for all confounding factors that can explain the differences between PCTs with CRHT teams and those without, and factors that may affect all PCTs over the time period considered, not just those with CRHT teams, something which this methodology was unable to do.

Method

When examining the impact of a policy, the challenge is to determine whether the observed changes over time are attributable to the policy. A method for examining this is to compare the outcomes of the group that is subject to the intervention (the treatment group) with a group that is not subject to the intervention (the control group). In measuring the effect of healthcare interventions, this evaluation problem is most commonly solved by using an experiment in the form of a trial. Such experiments, whether randomised or not, are however rare in the field of health policy.

In order to examine the impact of the CRHT policy on admissions over time we therefore construct a quasi-experiment using naturally occurring control groups. Ideally, the control group will be the same as the treatment group in every aspect except for the change in policy. In practice however, differences usually exist between the two groups in observed and unobserved characteristics. A less onerous assumption therefore is that in the absence of the policy change the unobserved differences between the two groups are the same over time. Thus although differences may exist between the two groups, provided these differences are time invariant, these can be ‘netted’ out. Difference-in-difference analysis is a commonly used empirical technique to evaluate the impact of policy that uses this assumption and is similar to a controlled before and after study but in a multivariate context. Reference Blundell and Costa Dias10

We used this technique to estimate the average change in admissions in the treatment and control groups before and after the introduction of CRHT teams and hence to estimate the average effect of the CRHT policy. The absolute differences between the treatment and control groups are not important; it is the difference in differences, that is, the difference in the changes over time, that is the subject of analysis. Thus the method strips out any potentially unobserved confounding differences between the control and treatment groups that are fixed over time. The analysis also controls for differences at baseline.
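As a minimal sketch of the arithmetic behind this approach, the two-group, two-period case can be written out directly. The function name and the admission figures below are hypothetical and chosen only for illustration; they are not taken from the study data.

```python
def difference_in_differences(treat_before, treat_after,
                              control_before, control_after):
    """Return the 2x2 difference-in-difference estimate.

    The change in the control group is subtracted from the change in the
    treated group, so any fixed gap between the groups cancels out.
    """
    change_treated = treat_after - treat_before
    change_control = control_after - control_before
    return change_treated - change_control


# Hypothetical mean admissions per PCT (illustrative only, not study data).
did = difference_in_differences(
    treat_before=500.0, treat_after=440.0,      # treated group falls by 60
    control_before=560.0, control_after=520.0,  # control group falls by 40
)
print(did)  # -20.0
```

In this toy case the treated group starts 60 admissions below the control group, but that baseline gap plays no part in the estimate; only the extra fall of 20 admissions is attributed to the intervention.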

We therefore identified the impact of the introduction of CRHT as a policy intervention in 2000/01 on trends in admissions. Our methodology considers the introduction of CRHT teams as an experiment and compares the change in admissions for PCTs with CRHT teams before and after the policy intervention with the change in admissions for PCTs in a comparator group that is not undergoing the intervention, over the same period. This allowed us to isolate the impact on admissions by looking at the difference in admissions between PCTs that introduced teams (the treatment group) and those that did not (the control group), before and after the introduction of the CRHT policy while controlling for characteristics of the teams and other factors affecting admissions. This methodology has not been used in this context before and is shown in Fig. 1.

We compared PCTs with CRHT teams (95 PCTs) to two groups of PCTs without CRHT teams: all PCTs without CRHT teams in England (134 PCTs); and a group of PCTs without CRHT teams that were statistically matched to have the same characteristics as PCTs with CRHT teams (120 PCTs). We also tested whether there was strategic behaviour in the adoption of CRHT status Reference Blundell and Costa Dias10 by examining the introduction of CRHT status in waves: a group of 17 PCTs that acquired CRHT teams in 2000/01 (wave one); a group of 10 PCTs that acquired CRHT teams in 2001/02 (wave two); a group of 12 PCTs that acquired CRHT teams in 2002/03 (wave three); and a group of 56 PCTs that acquired CRHT teams in 2003/04 (wave four).

Fig. 1 Graphical representation of the difference-in-difference methodology. CRHT, crisis resolution and home treatment.

As mentioned, this method isolates the average effect of the policy reform by removing unobservable PCT effects and common trends. However, it relies on two important assumptions: a common time trend across groups and random assignment to the treatment group. Reference Blundell and Costa Dias10,Reference Heckman, Ichimura, Smith and Todd11 Because PCTs were not randomly assigned to introduce CRHT teams, we used a matching method to correct for selection effects into the treatment group. The propensity score matching method evaluates the pre-treatment characteristics of each PCT and computes a single propensity score, which is the conditional probability of being assigned to the treatment group given those characteristics. The idea is to mimic the properties of a properly designed experiment with a statistically strong match between treated and non-treated PCTs based on their observable characteristics. Reference D'Agostino12,Reference Becker and Ichino13 We used a logit model for the propensity to be a treated PCT and matched the treated PCTs with a subset of PCTs in the rest of England comparator group in the pre-treatment year (1999/2000) on the basis of observable characteristics.
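The logic of propensity score matching can be sketched in a few lines. This is an illustration under stated assumptions, not the model used in the study: it uses a single hypothetical covariate (a standardised needs score), fits the logit by plain gradient ascent, and matches by nearest neighbour with replacement, a matching rule chosen here only for simplicity. All data values and function names are invented.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logit(xs, ys, lr=0.1, steps=5000):
    """Fit P(treated | x) = sigmoid(a + b*x) by gradient ascent on the log-likelihood."""
    a, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_a = sum(y - sigmoid(a + b * x) for x, y in zip(xs, ys))
        grad_b = sum((y - sigmoid(a + b * x)) * x for x, y in zip(xs, ys))
        a += lr * grad_a / n
        b += lr * grad_b / n
    return a, b


def match_nearest(treated_scores, control_scores):
    """For each treated unit, return the index of the control with the closest score."""
    return [
        min(range(len(control_scores)), key=lambda j: abs(control_scores[j] - s))
        for s in treated_scores
    ]


# Hypothetical pre-treatment needs scores and treatment flags for eight PCTs.
needs = [-1.5, -1.0, -0.5, 0.0, 0.2, 0.5, 1.0, 1.5]
treated = [0, 0, 1, 0, 1, 0, 1, 1]

a, b = fit_logit(needs, treated)
scores = [sigmoid(a + b * x) for x in needs]
treated_scores = [s for s, t in zip(scores, treated) if t == 1]
control_scores = [s for s, t in zip(scores, treated) if t == 0]
matches = match_nearest(treated_scores, control_scores)
print(matches)  # index of the matched control for each treated PCT
```

A real application would use many pre-treatment covariates and an established estimation routine; the sketch only shows how a fitted probability of treatment becomes the single dimension on which treated and untreated units are paired.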

We included time dummies to control for all unobserved temporal effects affecting hospital admissions. It is assumed that temporal factors influencing hospital admissions have the same effects for treated PCTs and non-treated PCTs. We therefore used an overall dummy variable for PCTs with CRHT teams, a set of time dummies and the interaction effect between these two sets of dummies, taking the value of one for those PCTs with a CRHT team only in those time periods when they have a team.
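One plausible way to write this specification is given below. The notation is an assumption introduced here for illustration and does not come from the original text: $A_{it}$ is admissions in PCT $i$ in year $t$, $C_i$ equals one for PCTs with CRHT teams, $D_{st}$ is a dummy equal to one when $t = s$ (with 1998/99 as the omitted baseline year), and $\mathbf{x}_{it}$ is a vector of covariates. The exact coding of the interaction term (all years, or only years in which a team was in place) may differ from this sketch.

```latex
A_{it} = \alpha + \beta\, C_i
       + \sum_{s=2}^{6} \gamma_s\, D_{st}
       + \sum_{s=2}^{6} \delta_s\, (C_i \times D_{st})
       + \mathbf{x}_{it}'\boldsymbol{\theta} + \varepsilon_{it}
```

Under this parameterisation, $\beta$ captures the overall difference between PCTs with and without CRHT teams, $\gamma_s$ the common year effects, and $\delta_s$ the additional change for PCTs with CRHT teams in year $s$ relative to the baseline year, which is the difference-in-difference term.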

We used three panel-data estimation techniques: pooled ordinary least squares, fixed-effects and a population-averaged panel-data model, equivalent to a random-effects model. We ran ordinary least squares and fixed-effects clustering on PCTs to allow for within-group (PCT) correlation of the errors. The fixed-effects models do not provide an estimate of the overall CRHT effect and only include time-varying covariates. These estimates are less likely to present bias than random-effects estimates but the standard errors may be less efficient because of using only within-group variation. Reference Wooldrigde14,Reference Cameron and Pravin15 We used robust standard errors in all models to allow for heterogeneity and autocorrelation. In the case of the random-effects model, semi-robust standard errors were estimated. In the random-effects model information about variation within-PCT as well as between-PCT is considered, allowing for a more efficient estimator. We included the mean of the time-varying variables and their deviation from the mean, known as the Mundlak adjustment. Reference Mundlak16 Variance-inflation factors were used to test for multicollinearity between explanatory variables and we dropped variables if there was evidence of collinearity. A regression specification error test (RESET) Reference Ramsey17 for misspecification was performed for all models.
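The Mundlak adjustment mentioned above amounts to splitting each time-varying covariate into a PCT-level mean and a within-PCT deviation from that mean. A minimal sketch follows; the function name, PCT labels and values are hypothetical.

```python
def mundlak_terms(panel):
    """Split a time-varying covariate into its PCT mean and within-PCT deviations.

    `panel` maps a PCT identifier to that PCT's values over the study years.
    """
    terms = {}
    for pct, values in panel.items():
        mean = sum(values) / len(values)
        terms[pct] = {
            "mean": mean,                               # between-PCT component
            "deviation": [v - mean for v in values],    # within-PCT component
        }
    return terms


# Hypothetical covariate (e.g. average length of stay) for two PCTs over 3 years.
panel = {"PCT_A": [10.0, 12.0, 14.0], "PCT_B": [20.0, 20.0, 23.0]}
terms = mundlak_terms(panel)
print(terms["PCT_A"])  # {'mean': 12.0, 'deviation': [-2.0, 0.0, 2.0]}
```

Entering both components in a random-effects model lets the coefficient on the deviation reflect within-PCT variation, while the mean term absorbs correlation between the covariate and the PCT-level effect.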

Data

We re-analysed data from the Glover et al study Reference Glover, Arts and Babu8 that covers PCT-level panel data for 6 years starting in 1998/99 with 2 years prior to the introduction of CRHT teams and 4 years post policy implementation. Admissions data came from hospital episode statistics and details of CRHT teams came from annual mental health service mapping data. These included model fidelity characteristics such as the date when the team was introduced, and the availability and service characteristics of the teams. Reference Glover, Arts and Babu8,Reference Glover, Arts and Babu9 Covariates included age-specific population size and the Department of Health's allocation of resources to English areas (AREA) mental health needs index. Reference Sutton, Gravelle, Morris, Leyland, Windmeijer and Dibben18 Data cleaning for this study resulted in a number of PCTs being omitted from the total of 303 PCTs. These were as a result of PCT boundary changes preventing trend analysis, poor coverage of gender in admissions data and discontinuities in admission numbers. This resulted in a final sample of 229 PCTs for analysis, of which 69 had one smoothed data-point in their admission data. Reference Glover, Arts and Babu8

We supplemented this data-set with the 2002 hospital episode statistics for PCT characteristics. These data cover a large number of variables on performance, staffing, expenditure and resource use and were used to run the propensity score matching and logit model.

One of the advantages of the difference-in-difference method is that all covariates can be introduced simultaneously and hence many more factors can be examined concurrently. A large number of different covariates were tested in the difference-in-difference models, including variables on region (rurality), population density, age, gender, three different composite needs indexes (one a population-adjusted age and needs index, the second the AREA needs index Reference Sutton, Gravelle, Morris, Leyland, Windmeijer and Dibben18 and the third the index of multiple deprivation (IMD)), other measures of morbidity such as expenditure on drug misuse and alcoholism day cases, ICD code (F2 including schizophrenia, schizotypal and delusional disorders and F3 including mood affective disorders) 19 and fidelity criteria on CRHT services such as multidisciplinarity of the team, daily availability, contact frequency with service users, intensity of provision in a short period of time and involvement until the problem is resolved, Reference Johnson, Needle, Bindman and Thornicroft5,Reference Glover, Arts and Babu9,20 as well as hospital episode statistics, general medical statistics and annual service mapping data. Results were stable to various covariates, but we present the models that provided the best specification results in terms of plausibility, significance and model fit.

Results

The propensity score matching method produced a control group of 120 PCTs based on the matching model in the pre-treatment year 1999/2000. The logit model (available from the author on request) was robust to various covariates, but the final model suggests that PCTs are more likely to introduce CRHT teams if they have greater mental health needs, higher psychiatric expenditure but less expenditure on medical staff. London was also less likely to get a CRHT team.

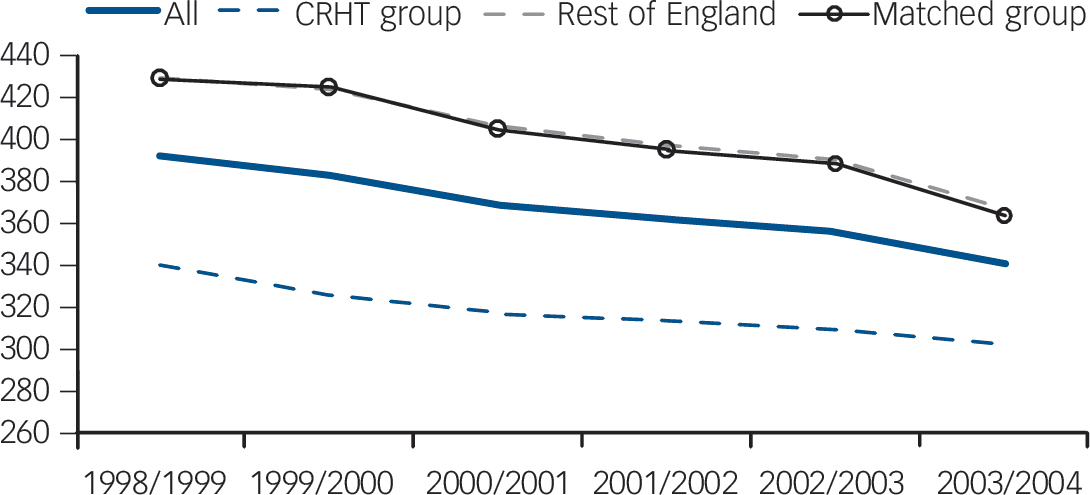

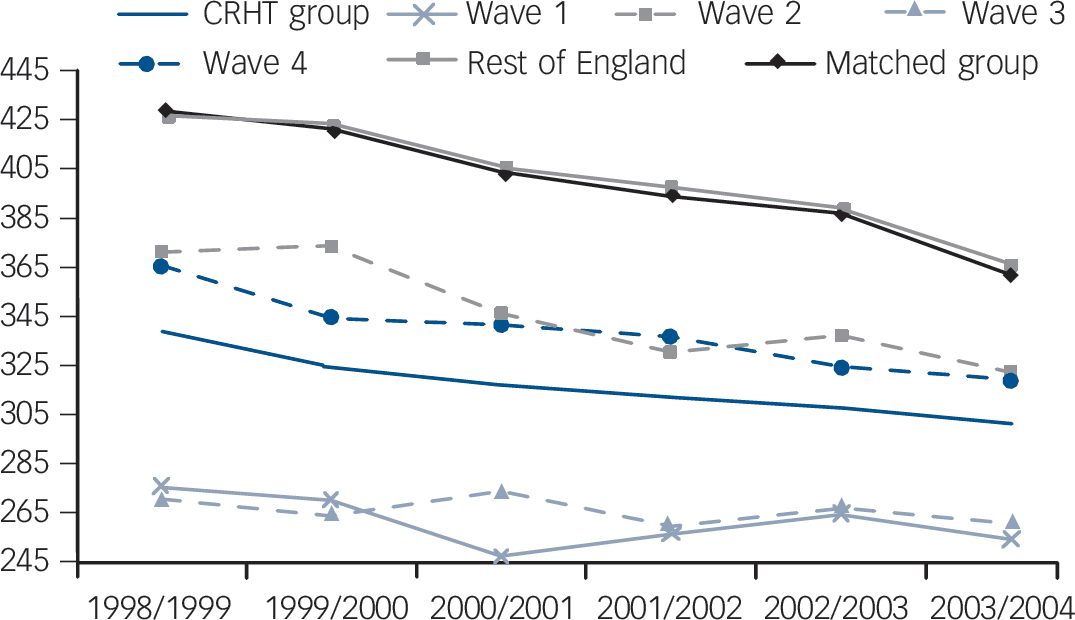

Fig. 2 Plot of mean admissions for all primary care trusts (PCTs) in England, PCTs with crisis resolution and home treatment (CRHT) teams and PCTs without CRHT teams: rest of England and matched group: 1998/99-2003/04.

Fig. 3 Plot of mean admissions by wave of crisis resolution and home treatment (CRHT) introduction for primary care trusts (PCTs) with CRHT teams: 1998/99-2003/04.

Figure 2 shows that mean admissions for the treatment and control groups have steadily fallen over the 6 years. Trends in admissions for the two control groups were very similar. We were interested in whether the overall decrease was significantly greater for the CRHT group after the policy implementation in 2000/01, relative to the two control groups (rest of England and the matched control). Figure 3 shows the mean admissions for PCTs with CRHT teams over time by wave. All four waves show on average a declining trend in mean admissions; however, wave one and two PCTs show slightly more prominent declines with the introduction of CRHT teams in 2000/01, levelling out again in subsequent years.

The regression results and coefficients on the covariates for the difference-in-difference models for the overall effect of CRHT status on admissions, relative to the two comparator groups and for each of the estimation methods, are shown in online Table DS1. Results are remarkably consistent across the different estimation methods and for the two comparator groups. Models pass all tests for specification and show no multicollinearity. The overall CRHT effect suggests lower admissions for PCTs with CRHT of around 37 admissions but this difference is not significant. The time trends show that for most years there are significant reductions in admissions over time relative to 1998/99 for fixed- and random-effects models of up to about 65 admissions in the year 2003/04. The next coefficients show the interaction between CRHT status and year trends, picking up differences for PCTs with CRHT teams over and above the overall year trends, and showing that these are generally not significant except for the random-effects results for 2003/04. Random-effects estimations show that for the covariates, admissions are higher with the presence of a multidisciplinary CRHT team, a lower proportion of F2-related admissions (schizophrenia and delusional disorders), longer average length of stay in hospital, better performance in the star-rating system of the Healthcare Commission and lower mental illness in-patient days. In addition, ordinary least squares results suggest that admissions are lower with more intensive contact over a short period of time with the CRHT team, and are higher with higher capital asset expenditure and with a lower population-adjusted age and needs index.

We then estimated the difference-in-difference results (foot of online Table DS1) to test whether the interaction effects between CRHT status and year trends were different between the baseline year 1998/99 and subsequent years. For the CRHT policy to have had an effect in changing behaviour with respect to admissions, we would expect the difference in admissions to be negative and significant between the baseline year (pre-treatment year) and the treatment years (2000/01, 2001/02, 2002/03 and 2003/04) and not the other years. These tests show no significant results.

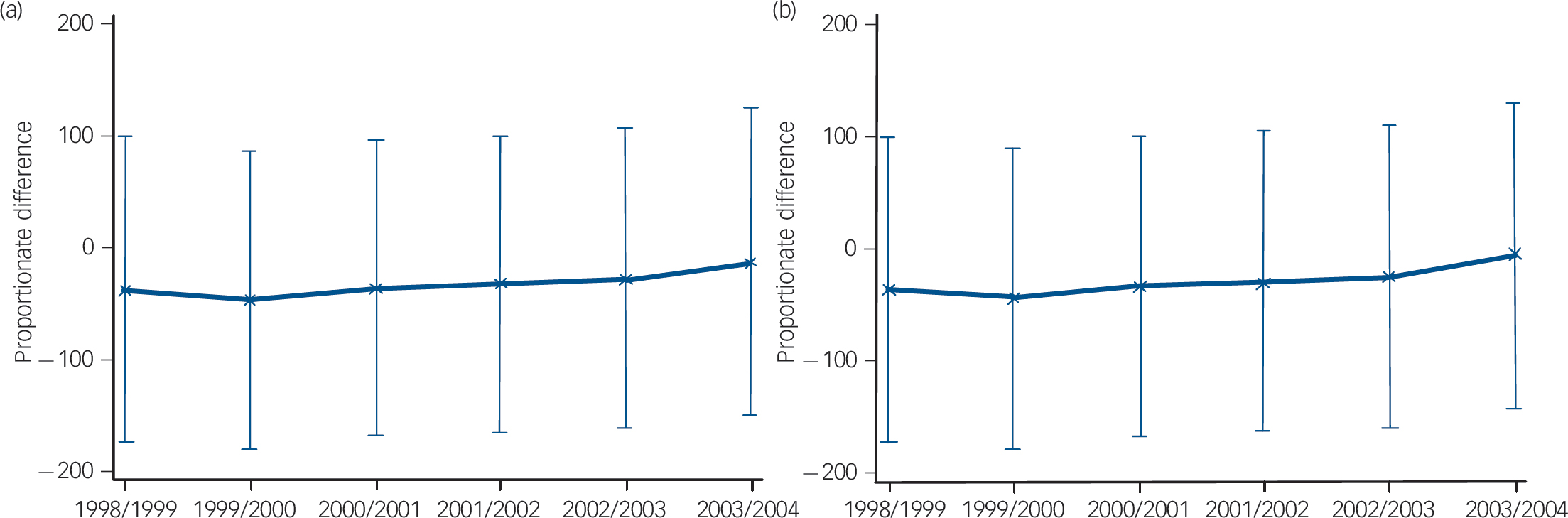

Fig. 4 Proportionate difference in admissions between primary care trusts (PCTs) with and without crisis resolution and home treatment (CRHT) teams: (a) rest of England comparator; (b) matched group comparator: 1998/99-2003/04.

Figure 4 summarises the difference-in-difference results by showing the proportionate difference in admissions for PCTs with CRHT teams relative to each comparator group for each of the 6 years using random-effects estimations. Zero in this case represents the comparator group. Thus if the confidence intervals overlap zero, the proportionate change in admissions is not significant relative to the comparator group. These show lower admissions for the treated group in comparison with the non-treated one in both comparator groups across all years; however, these differences are not significant since the confidence intervals all overlap zero. In addition, the confidence intervals for each year are aligned (they overlap from one year to the next), suggesting that the differences over time are also not significant. Thus the policy intervention of introducing CRHT teams in 2000/01 was not responsible for a change in PCT behaviour with regard to reducing admissions; these were already lower for PCTs with CRHT teams, although not significantly. There has been no significant difference between admissions for PCTs with and without CRHT teams, either before or after the policy introduction. If anything, the difference in difference has been diminishing over time.

Although not reported, we also estimated a difference-in-difference model for the wave analysis of the difference in policy intervention between waves one, two, three and four and the control groups. The results are consistent with the overall analysis. The overall CRHT effect suggests significantly lower admissions for wave one PCTs, of around 127 admissions, but only in the ordinary least squares results. Random-effects results were not significant. Wave three PCTs had lower admissions of around 93, although not significant in any of the models, and wave four PCTs had lower admissions of around 23 admissions, again not significant, whereas wave two PCTs were only around 3 admissions lower and not significant. The time trends showed that for most years there were significant reductions in admissions relative to 1998/99 for fixed- and random-effects models of up to about 65 admissions in 2003/04. The coefficients for the interactions between CRHT waves and year trends were again almost always insignificant. The covariates for the models were again consistent with previous results but in addition showed significant results in the ordinary least squares models for higher admissions associated with CRHT being available 24 h a day, 7 days a week.

Table 1 Summary of results, difference between primary care trusts with and without crisis resolution and home treatment (CRHT) teams in each year, by wave

| | Wave 1 | Wave 2 | Wave 3 | Wave 4 |
|---|---|---|---|---|
| Rest of England comparator | | | | |
| Difference in 1998/99 | –29.277 | –4.991 | –94.216 | –23.714 |
| Difference in 1999/2000 | –129.662 | 1.563 | –96.279 | –39.636 |
| Difference in 2000/01 | –136.685* | –8.314 | –68.006 | –23.455 |
| Difference in 2001/02 | –114.316 | –17.386 | –73.723 | –20.904 |
| Difference in 2002/03 | –98.631 | –0.432 | –58.983 | –23.079 |
| Difference in 2003/04 | –86.978 | 6.498 | –41.22 | –5.140 |
| Matched group comparator | | | | |
| Difference in 1998/99 | –129.002 | –4.979 | –93.714 | –22.801 |
| Difference in 1999/2000 | –128.214 | 2.776 | –94.590 | –37.547 |
| Difference in 2000/01 | –134.246 | –6.154 | –65.399 | –20.485 |
| Difference in 2001/02 | –111.599 | –14.715 | –70.706 | –17.441 |
| Difference in 2002/03 | –97.352 | 0.717 | –57.385 | –21.161 |
| Difference in 2003/04 | –82.574 | 10.775 | –36.548 | –0.120 |

* Significant at 10%.

We summarised the difference-in-difference results for the wave analysis using random-effects estimations in Table 1 to test whether the interaction effects between CRHT waves and year trends were different between the baseline year 1998/99 and subsequent years. The proportionate differences were all not significant, with the exception of wave one in respect to the difference between 2000/01 and the baseline year, although this was only in the rest of England comparator group and only at the 10% level. This is most likely to be the result of the drop in admissions for wave one PCTs in 2000/01.
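The comparison with the baseline year can be illustrated with the wave one figures for the rest of England comparator, copied as printed in Table 1. The sketch below treats each entry as the difference between treated and comparator PCTs in that year and subtracts the 1998/99 value; the function and variable names are hypothetical, and the calculation says nothing about statistical significance.

```python
# Wave one, rest of England comparator, as printed in Table 1.
wave_one_rest_of_england = {
    "1998/99": -29.277,
    "1999/2000": -129.662,
    "2000/01": -136.685,
    "2001/02": -114.316,
    "2002/03": -98.631,
    "2003/04": -86.978,
}


def change_from_baseline(differences, baseline="1998/99"):
    """Subtract the baseline-year difference from each later year's difference."""
    base = differences[baseline]
    return {
        year: round(diff - base, 3)
        for year, diff in differences.items()
        if year != baseline
    }


did_by_year = change_from_baseline(wave_one_rest_of_england)
for year, value in did_by_year.items():
    print(year, value)
```

On these figures the gap between wave one PCTs and the rest of England widens by roughly 107 admissions between 1998/99 and 2000/01 and then narrows in later years.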

Figure 5 summarises the difference-in-difference results for the wave analysis relative to both comparator groups for each of the 6 years, also using the random-effects estimations. This shows again lower admissions for the different waves in comparison with both non-treated control groups, but with no significant differences.

Fig. 5 Proportionate difference in admissions between primary care trusts (PCTs) with crisis resolution and home treatment (CRHT) teams (by wave of CRHT introduction) and PCTs without CRHT teams: (a) rest of England comparator; (b) matched group comparator: 1998/99-2003/04.

As in the descriptive plot (Fig. 3), wave one and wave three PCTs have the greatest proportionate difference in admissions relative to the control groups. They show lower admissions relative to both comparator groups across all years but results are not significant for any of the years, since the confidence intervals always overlap zero. Wave four PCTs also show proportionately lower admissions relative to the control groups, although less so than for wave one and wave three PCTs, but again these differences are not significant in any of the years. Similarly, wave two PCTs show no significant difference relative to comparator groups in any of the years. Again these results suggest that the policy intervention of introducing CRHT teams in 2000/01 was not responsible for a change in PCT behaviour with regard to reducing admissions for any of the waves of PCTs. There has been no significant difference between admissions for different waves of PCTs with CRHT teams and those without, either before or after the policy intervention. If anything, the proportionate differences between the different waves of PCTs with CRHT teams and those without, relative to both control groups, are diminishing over time.

Discussion

Main findings

Past evidence on the comparative impact of CRHT teams on admissions has been based on comparisons of temporal changes that lack robustness. Simple comparisons of PCTs with CRHT teams before and after policy implementation or between PCTs with and without CRHT teams will not produce robust results because they will only take into account either cross-sectional differences (PCTs with CRHT teams versus PCTs without CRHT teams) or temporal differences (PCTs before the CRHT policy versus PCTs after the policy). A major challenge in policy evaluation of this type is to combine and evaluate the temporal and cross-sectional differences jointly.

We used a difference-in-difference methodology to overcome some of the potential methodological limitations of previous studies. This method allowed us to combine consideration of both temporal differences and cross-sectional differences jointly. Compared with simple comparisons, this joint comparison has the advantage of dealing with self-selection bias problems influencing policy evaluation (since PCTs self-select into the policy) and also in controlling for common time trends or changes affecting all PCTs (not just PCTs with CRHT teams) over the period the policy was introduced. The matching process also allowed us to simulate a randomised control study providing a very powerful way to evaluate policy effects.

This analysis suggests that the CRHT policy per se has not made a significant difference to admissions. There has been no significant difference between PCTs with CRHT teams and those without, either before or after the phased introduction of the CRHT policy. Although we found lower admissions for PCTs that have CRHT teams compared with PCTs that have not implemented the policy, this difference was never significant. In fact, we found a selection effect at work in as much as those PCTs with lower admissions were more likely to introduce the policy. The trends suggest that the relative differences between PCTs with CRHT teams and those without are diminishing.

The wave analysis confirms the results. Wave one PCTs seem to show lower admissions than other waves of PCTs, but results are not significant. All waves seem to show their proportionate differences in admissions reducing over time relative to the control groups.

Limitations and future research

The aim of CRHT services was to reduce in-patient admissions and bed occupancy, support earlier discharge from in-patient services and reduce out-of-area treatments (where a bed can only be found for a person outside the local National Health Service area). 21 Our study examined whether the CRHT policy has been effective in reducing admissions and found that there is no evidence that the CRHT policy per se has made any difference. We focused only on this one aspect of the policy and did not provide specific evidence on the effectiveness of the CRHT policy in other areas such as bed occupancy, earlier discharge or out-of-area treatments. We did not examine the impact of the CRHT policy in terms of user satisfaction, 21 the quality of care provided or the level of integration between providers in the care pathway. Nor did we provide evidence on the cost-effectiveness of CRHT teams, which has already been explored through an economic evaluation based on a randomised controlled trial. Reference McCrone, Johnson, Nolan, Pilling, Sandor and Hoult22 Crisis resolution and home treatment teams may be effective in other ways that are better evaluated through trials rather than with the analysis of secondary data. The overall CRHT policy package is a complex mix of different elements, all of which require careful evidence and scrutiny.

Our results concur with a recent case-control study by Tyrer et al Reference Tyrer, Gordon, Nourmand, Lawrence, Curran and Southgate23 that showed no overall difference in admissions following the introduction of a new CRHT team compared with a control service, but rather a change in the composition of admissions. Although the new CRHT team prevented informal admissions, this was accompanied by an increase in compulsory admissions that more than cancelled out the gain in preventing admissions. Unfortunately, we were not able to separate out compulsory and informal admissions in our study since there was erratic variation in data on compulsory admissions. Reference Glover, Arts and Babu9 This would be a useful analysis for future research.

There are a number of possible explanations for our results. First, PCTs who are pro-active in taking steps to lower admissions may pre-emptively introduce other policies to reduce admissions alongside the introduction of CRHT. There is evidence to suggest that the introduction of some CRHT teams has corresponded with simultaneous reductions in bed numbers. Reference Tyrer, Gordon, Nourmand, Lawrence, Curran and Southgate23 This may explain why PCTs with lower admissions were more likely to introduce the policy. Second, there is evidence of a lack of gatekeeping function by CRHT teams, with only around half of all admissions being assessed by CRHT staff 21 and only 68% of teams claiming that they act as the gatekeeper. Reference Onyett, Linde, Glover, Floyd, Bradley and Middleton24 These implementation difficulties may have reduced teams' abilities to prevent admissions. Third, our data are at PCT level, which might dilute the analysis of the impact of CRHT teams that are at a much smaller geographical level. Since PCT boundaries do not overlap with CRHT teams' catchment areas, our analysis might give rise to problems of spatial autocorrelation. Finer gradations of team geography and admissions might reveal important effects of the policy. This could, in principle, be explored because hospital episode statistics data provide geographical markers for small areas such as lower super output area codes. Admissions could therefore be aggregated up to CRHT team level and matched specifically with team characteristics. The use of smaller area statistics would provide a much more precise analysis of the CRHT teams' catchment areas and their impact on hospital admissions and explore local clustering effects as suggested by Glover et al. Reference Glover, Arts and Babu8 However, teams' geographic boundaries are not very clear-cut, even in the present day, with many overlaps, as well as many changes over time. 
Thus it seems likely that a higher level of geography such as the PCT level may in fact be the best available for this sort of analysis since a consistent unit of analysis is extremely important, even if it may mask some imperfections in CRHT teams' catchment areas.

There is therefore scope for future research on all the various aspects of the CRHT policy package in order to properly evaluate it and maximise its impact. Reference Johnson, Needle, Bindman and Thornicroft5

Funding

R.J. holds a post-doctoral fellowship from the Department of Health's R&D Programme on performance measurement in mental health services. E.B. undertook this work with R.J. at the Centre for Health Economics at the University of York during her MSc placement in 2009.

Acknowledgements

We are extremely grateful to Gyles Glover from the North East Public Health Observatory for Mental Health for providing the data for this re-analysis.
